Hello, I have an OpenStack deployment in use. Recently I tried to add another host, but during the process a machine got stuck in the model and the connection to MAAS (the credential) was lost. I've tried almost everything I found here without success. Can anyone help? I would rather not destroy the model and start all over again.

Welcome @marcosgilberto

If you've lost the MAAS API key, you should be able to create a new one (assuming the MAAS server is still functional) and update the Juju credential.

This sounds somewhat similar to a problem @anastasia-macmood helped me with a couple of months back in this thread.

Thank you for your help.

I already tried adding another credential, but I have a machine that is stuck.

I've tried to remove it with this command:

```
juju remove-machine --force --no-wait
```

Even so, it does not disappear.

Here are some screenshots:

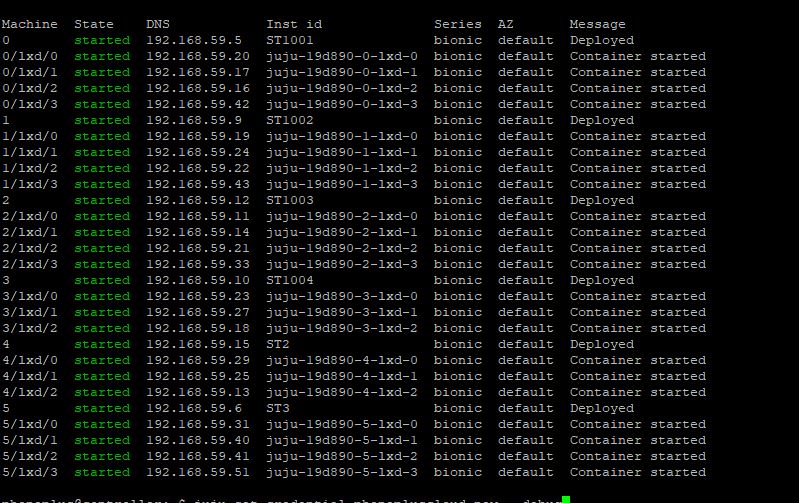

```
Machine   State    DNS            Inst id              Series  AZ       Message
0         started  192.168.59.5   ST1001               bionic  default  Deployed
0/lxd/0   started  192.168.59.20  juju-19d890-0-lxd-0  bionic  default  Container started
0/lxd/1   started  192.168.59.17  juju-19d890-0-lxd-1  bionic  default  Container started
0/lxd/2   started  192.168.59.16  juju-19d890-0-lxd-2  bionic  default  Container started
0/lxd/3   started  192.168.59.42  juju-19d890-0-lxd-3  bionic  default  Container started
1         started  192.168.59.9   ST1002               bionic  default  Deployed
1/lxd/0   started  192.168.59.19  juju-19d890-1-lxd-0  bionic  default  Container started
1/lxd/1   started  192.168.59.24  juju-19d890-1-lxd-1  bionic  default  Container started
1/lxd/2   started  192.168.59.22  juju-19d890-1-lxd-2  bionic  default  Container started
1/lxd/3   started  192.168.59.43  juju-19d890-1-lxd-3  bionic  default  Container started
2         started  192.168.59.12  ST1003               bionic  default  Deployed
2/lxd/0   started  192.168.59.11  juju-19d890-2-lxd-0  bionic  default  Container started
2/lxd/1   started  192.168.59.14  juju-19d890-2-lxd-1  bionic  default  Container started
2/lxd/2   started  192.168.59.21  juju-19d890-2-lxd-2  bionic  default  Container started
2/lxd/3   started  192.168.59.33  juju-19d890-2-lxd-3  bionic  default  Container started
3         started  192.168.59.10  ST1004               bionic  default  Deployed
3/lxd/0   started  192.168.59.23  juju-19d890-3-lxd-0  bionic  default  Container started
3/lxd/1   started  192.168.59.27  juju-19d890-3-lxd-1  bionic  default  Container started
3/lxd/2   started  192.168.59.18  juju-19d890-3-lxd-2  bionic  default  Container started
4         started  192.168.59.15  ST2                  bionic  default  Deployed
4/lxd/0   started  192.168.59.29  juju-19d890-4-lxd-0  bionic  default  Container started
4/lxd/1   started  192.168.59.25  juju-19d890-4-lxd-1  bionic  default  Container started
4/lxd/2   started  192.168.59.13  juju-19d890-4-lxd-2  bionic  default  Container started
5         started  192.168.59.6   ST3                  bionic  default  Deployed
5/lxd/0   started  192.168.59.31  juju-19d890-5-lxd-0  bionic  default  Container started
5/lxd/1   started  192.168.59.40  juju-19d890-5-lxd-1  bionic  default  Container started
5/lxd/2   started  192.168.59.41  juju-19d890-5-lxd-2  bionic  default  Container started
5/lxd/3   started  192.168.59.51  juju-19d890-5-lxd-3  bionic  default  Container started
17        stopped  192.168.59.44  Linux01              bionic  default  Deployed
17/lxd/0  pending                 pending              bionic
```
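In a larger model it can help to find entries like `17` and `17/lxd/0` without reading the whole table. This is a minimal sketch, assuming that `juju status --format=json` contains a `machines` map keyed by machine ID, with `juju-status.current` on each entry and containers nested under a `containers` key (the same layout `juju show-machine` prints further down). The helper name `unhealthy_machines` is hypothetical.

```python
from typing import Dict, List, Tuple


def unhealthy_machines(status: Dict) -> List[Tuple[str, str]]:
    """Return (machine_id, juju_status) for every machine or container
    whose juju-status is not "started".

    Assumed input shape: {"machines": {"17": {"juju-status": {"current": ...},
    "containers": {"17/lxd/0": {...}}}}}
    """
    found: List[Tuple[str, str]] = []

    def walk(machines: Dict) -> None:
        for machine_id, info in machines.items():
            current = info.get("juju-status", {}).get("current", "unknown")
            if current != "started":
                found.append((machine_id, current))
            # Containers (e.g. 17/lxd/0) are nested under their host machine.
            walk(info.get("containers", {}))

    walk(status.get("machines", {}))
    return found
```

One way to feed it live data is `unhealthy_machines(json.loads(subprocess.check_output(["juju", "status", "--format=json"])))`. Against the status above it should flag `17` (stopped) and `17/lxd/0` (pending).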

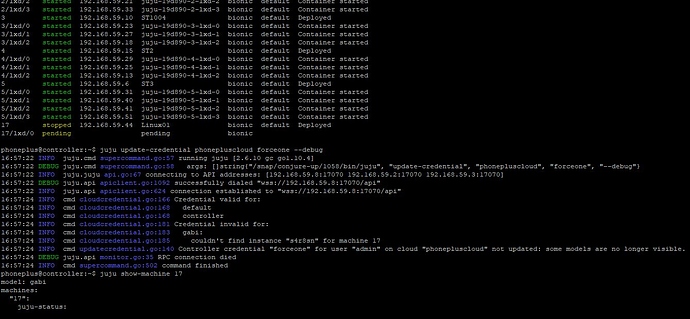

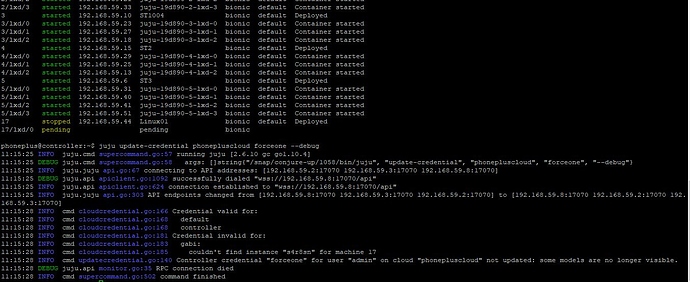

```
phoneplus@controller:~$ juju update-credential phonepluscloud forceone --debug
16:57:22 INFO juju.cmd supercommand.go:57 running juju [2.6.10 gc go1.10.4]
16:57:22 DEBUG juju.cmd supercommand.go:58 args: []string{"/snap/conjure-up/1058/bin/juju", "update-credential", "phonepluscloud", "forceone", "--debug"}
16:57:22 INFO juju.juju api.go:67 connecting to API addresses: [192.168.59.8:17070 192.168.59.2:17070 192.168.59.3:17070]
16:57:22 DEBUG juju.api apiclient.go:1092 successfully dialed "wss://192.168.59.8:17070/api"
16:57:22 INFO juju.api apiclient.go:624 connection established to "wss://192.168.59.8:17070/api"
16:57:24 INFO cmd cloudcredential.go:166 Credential valid for:
16:57:24 INFO cmd cloudcredential.go:168 default
16:57:24 INFO cmd cloudcredential.go:168 controller
16:57:24 INFO cmd cloudcredential.go:181 Credential invalid for:
16:57:24 INFO cmd cloudcredential.go:183 gabi:
16:57:24 INFO cmd cloudcredential.go:185 couldn't find instance "s4r8sn" for machine 17
16:57:24 INFO cmd updatecredential.go:140 Controller credential "forceone" for user "admin" on cloud "phonepluscloud" not updated: some models are no longer visible.
16:57:24 DEBUG juju.api monitor.go:35 RPC connection died
16:57:24 INFO cmd supercommand.go:502 command finished
```
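The `Credential invalid for: gabi` lines suggest why the update was refused: the controller checks the credential against each model that uses it, and in model `gabi` machine 17 still points at instance `s4r8sn`, which the cloud no longer reports. The sketch below is a simplified, hypothetical model of that kind of check, not Juju's actual implementation; `find_missing_instances` and its inputs are illustrative names, and it assumes only top-level machines (not LXD containers) are compared.

```python
from typing import Dict, List, Set


def find_missing_instances(model_machines: Dict[str, str],
                           cloud_instances: Set[str]) -> List[str]:
    """Simplified credential check (hypothetical, not Juju's code).

    model_machines: top-level machine ID -> instance ID recorded in the model.
    cloud_instances: instance IDs the cloud reports for this credential.
    Returns one message per machine whose instance the cloud cannot find.
    """
    errors = []
    for machine_id, instance_id in model_machines.items():
        if instance_id not in cloud_instances:
            # Mirrors the wording of the log line above.
            errors.append(
                f"couldn't find instance \"{instance_id}\" for machine {machine_id}"
            )
    return errors
```

Under this model, any stale machine record makes the whole model fail validation, which is why fixing the credential alone did not help while machine 17 was still recorded.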

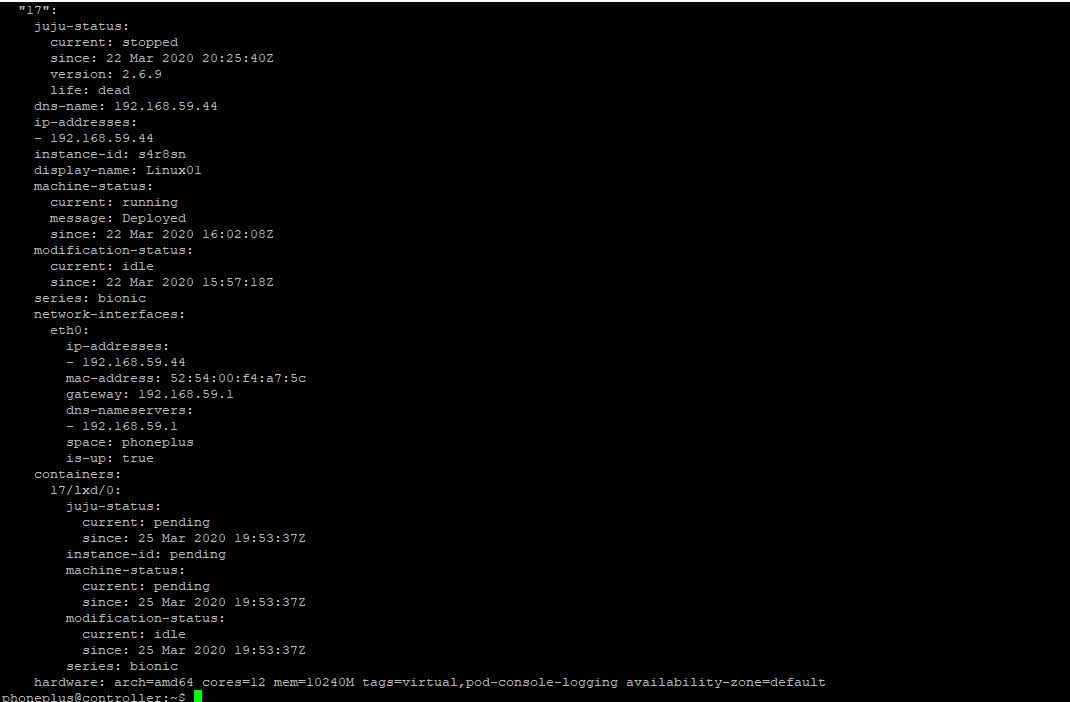

```
phoneplus@controller:~$ juju show-machine 17
model: gabi
machines:
  "17":
    juju-status:
      current: stopped
      since: 22 Mar 2020 20:25:40Z
      version: 2.6.9
    life: dead
    dns-name: 192.168.59.44
    ip-addresses:
    - 192.168.59.44
    instance-id: s4r8sn
    display-name: Linux01
    machine-status:
      current: running
      message: Deployed
      since: 22 Mar 2020 16:02:08Z
    modification-status:
      current: idle
      since: 22 Mar 2020 15:57:18Z
    series: bionic
    network-interfaces:
      eth0:
        ip-addresses:
        - 192.168.59.44
        mac-address: 52:54:00:f4:a7:5c
        gateway: 192.168.59.1
        dns-nameservers:
        - 192.168.59.1
        space: phoneplus
        is-up: true
    containers:
      17/lxd/0:
        juju-status:
          current: pending
          since: 25 Mar 2020 19:53:37Z
        instance-id: pending
        machine-status:
          current: pending
          since: 25 Mar 2020 19:53:37Z
        modification-status:
          current: idle
          since: 25 Mar 2020 19:53:37Z
        series: bionic
    hardware: arch=amd64 cores=12 mem=10240
```
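The fields that matter in this output can also be pulled out programmatically. A minimal sketch, assuming `juju show-machine 17 --format=json` returns the same structure as the YAML above; `describe_machine` is a hypothetical helper that reports the machine's `life`, its recorded instance ID, and any containers that never received an instance.

```python
from typing import Dict, List


def describe_machine(show_machine: Dict, machine_id: str) -> Dict[str, object]:
    """Summarize the relevant fields of `juju show-machine` output.

    Assumes the layout shown above: {"machines": {id: {...}}}.
    """
    machine = show_machine["machines"][machine_id]
    containers = machine.get("containers", {})
    # Containers whose instance-id is still "pending" were never provisioned.
    never_provisioned: List[str] = [
        cid for cid, info in containers.items()
        if info.get("instance-id") == "pending"
    ]
    return {
        # Assumption: "life" is absent from the output for live machines.
        "life": machine.get("life", "alive"),
        "instance_id": machine.get("instance-id"),
        "juju_status": machine.get("juju-status", {}).get("current"),
        "never_provisioned": never_provisioned,
    }
```

For machine 17 this would report `life` as `dead`, instance `s4r8sn`, and `17/lxd/0` as never provisioned.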

I've been in this situation before, but back then I was only testing the solution. Now I'm in production and already have systems running, so I can't just delete everything and start over. In my case, it seems the controller can't find machine 17 in my cloud, and that is what caused the credential to be lost. Even creating another machine with the same name didn't work. I also went into the MAAS database and altered the machine ID to try to trick the controller, but that didn't work either.

Does anyone know whether I can edit this machine's configuration file? I believe that if I could tell the system the machine is dead, it would delete it automatically.

If all else fails, does anyone know how to upload images and snapshots directly from OpenStack?

Hello again. I finally managed to solve this problem.

First (I'm not sure whether this made a difference): in the Horizon dashboard I went to Hypervisors and evacuated the stopped hosts. After that, when I ran `juju update-credential phonepluscloud forceone --debug`, the response changed: it asked whether I wanted to apply the change to the client, the controller, or both.

Second: I created a new credential for the cloud with `juju add-credential phonepluscloud`.

Third: I applied the new credential with `juju set-credential phonepluscloud new --debug`.

After that, everything worked.
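For anyone hitting the same problem, the command-line part of this fix could be scripted. A minimal sketch that only reproduces the two commands from this post; `recovery_commands`, `run`, and the `dry_run` switch are hypothetical names, and the Horizon evacuation step is not covered. `juju add-credential` prompts interactively, so the credential name you type there must match the one passed to `set-credential` (`new` in this thread).

```python
import subprocess
from typing import List


def recovery_commands(cloud: str, credential: str) -> List[List[str]]:
    """Build the command sequence used in this thread (hypothetical helper).

    1. Create a fresh credential for the cloud (interactive prompts).
    2. Point the currently selected model at the new credential.
    """
    return [
        ["juju", "add-credential", cloud],
        ["juju", "set-credential", cloud, credential],
    ]


def run(commands: List[List[str]], dry_run: bool = True) -> None:
    """Print each command; execute it only when dry_run is False."""
    for cmd in commands:
        print("+", " ".join(cmd))
        if not dry_run:
            # check=True stops at the first failing step.
            subprocess.run(cmd, check=True)
```

Usage: `run(recovery_commands("phonepluscloud", "new"), dry_run=False)`. Since `set-credential` acts on the currently selected model, switch to the affected model with `juju switch` first if needed.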